Insights

Shaping product direction through executive collaboration

Role: Design Lead (Strategic Contributor)

Scope: Concept development, validation strategy, direction setting, executive collaboration

Context: Early-stage initiative with CPO & CTO

Problem Space

xMatters was evolving beyond alert management toward operational intelligence. Leadership identified an opportunity to introduce Insights — a strategic analytics surface intended to help teams interpret incident patterns, systemic signals, and risk trends.

However:

- The value proposition was broad

- The primary persona was unclear

- The interaction model was undefined

- Assumptions about customer needs were untested

The risk was not feasibility. The risk was investing in the wrong abstraction.

Strategic Context

I was invited into working sessions with the CPO and CTO to shape this initiative from its earliest stage.

Rather than designing screens immediately, my role was to:

- Translate strategic ambition into tangible models

- Surface assumptions early

- Clarify tradeoffs before engineering investment

- Structure ambiguity into decision-ready options

At this stage, the opportunity space was wide.

Concept Exploration

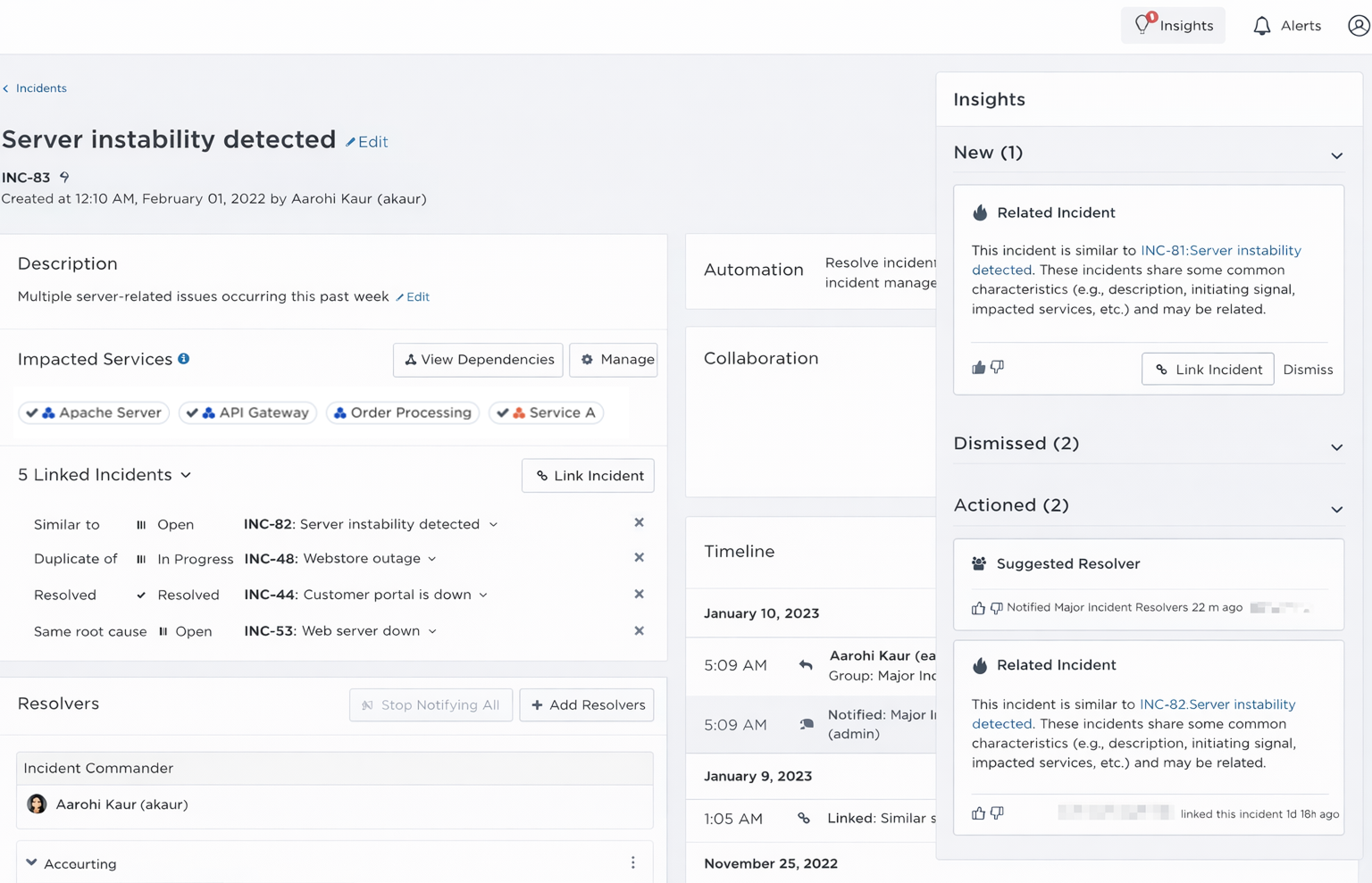

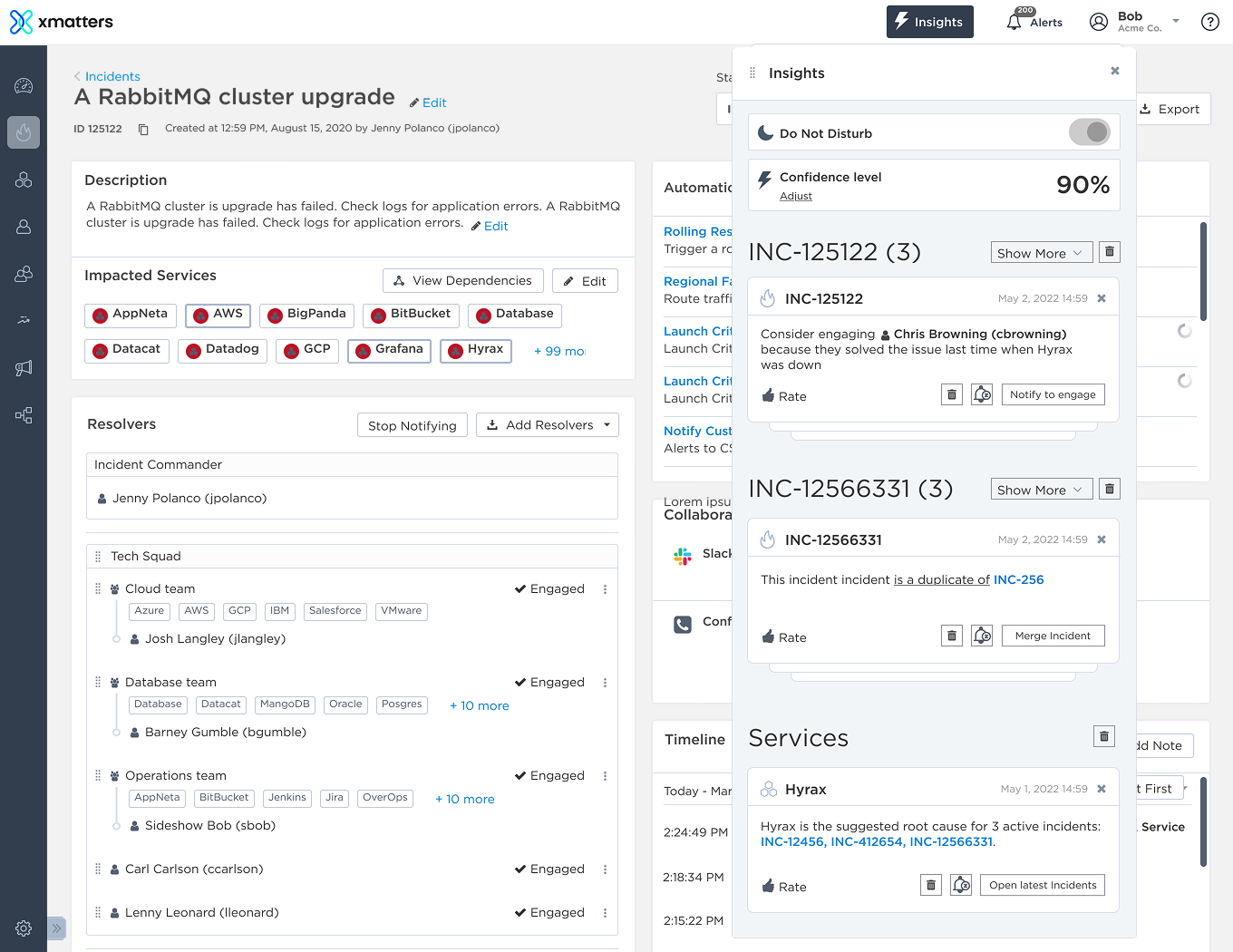

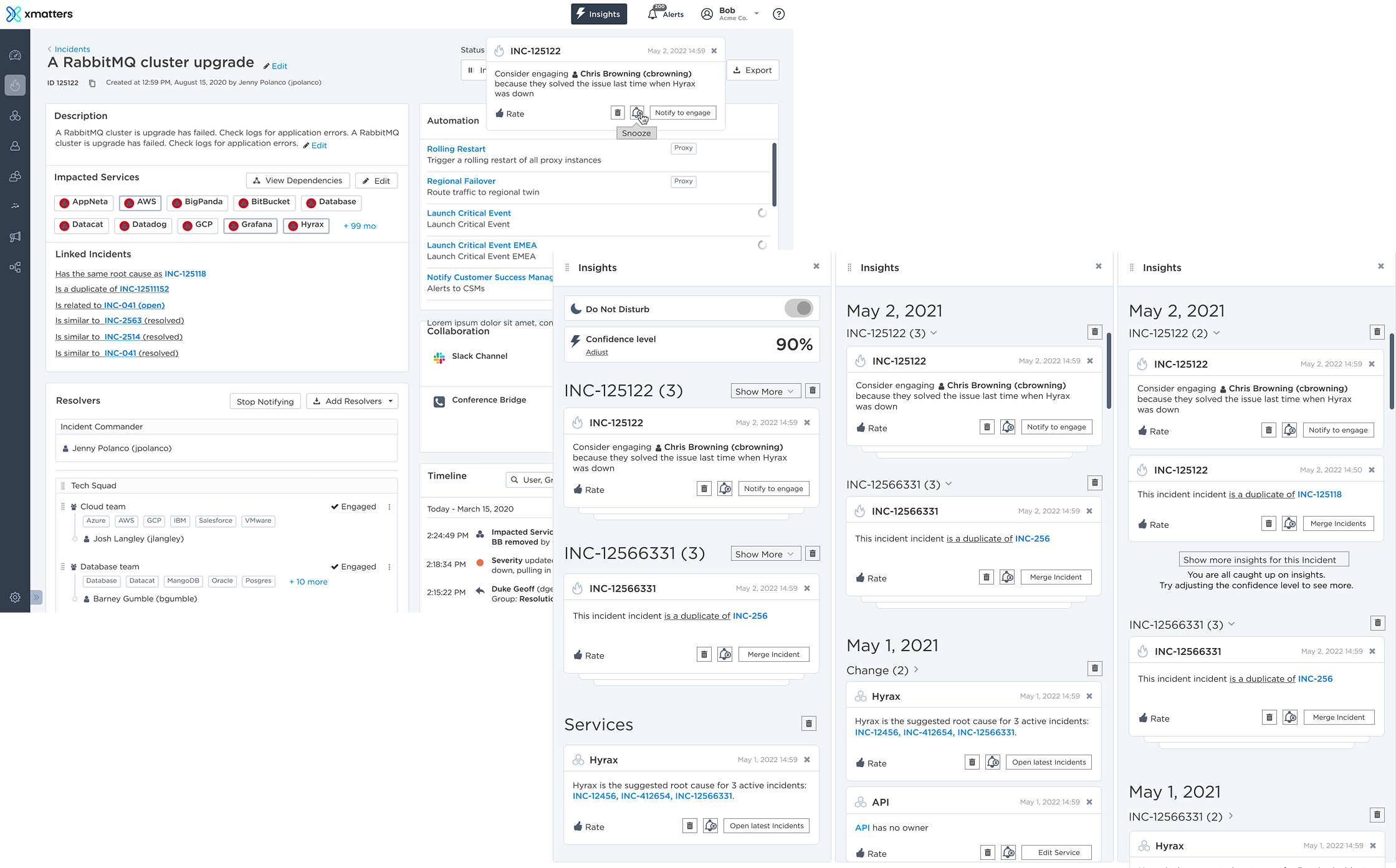

To clarify direction, I created multiple conceptual models for how Insights could function. Early explorations included:

- Incident clustering views (grouped by date, change, or service)

- Duplicate detection surfacing inside incidents

- Suggested root cause relationships

- Confidence scoring indicators

- Service-level pattern aggregation

- Timeline-integrated insights panels

These mocks helped leadership see:

- Whether Insights should live inside incidents or as a standalone surface

- Whether it should emphasize aggregation or recommendation

- How proactive vs reactive it should feel

One direction emerged as the strongest candidate.

Approach

Strategic Reframe

As exploration progressed, the core question shifted. This was not about building a dashboard. It was about defining:

- What decision Insights was meant to support

- Who the true consumer was

- Whether this was operational tooling or executive reporting

- What differentiated it from existing analytics

This reframing shifted the team from layout thinking to product positioning.

Defining the Insight Model

The deeper challenge became: What qualifies as an insight?

We tested and refined:

- Categories of insights

- Grouping and aggregation logic

- Linked incident relationships

- Confidence scoring and trust thresholds

- The balance between summary and contextual depth

Rather than polishing UI, we were defining the ontology of Insights.

Structured Validation

Before committing significant engineering investment, I recommended validating critical assumptions with customers. I translated open questions into:

- Clear hypotheses

- Interactive prototypes

- A structured research script aligned to decision criteria

We tested:

- Individual insight types

- Category logic and grouping

- Linked incident clarity

- Card-level interaction patterns

- Trust calibration around confidence indicators

- Embedded vs standalone placement

Rather than validating aesthetics, we validated positioning and value.

Leadership Through Structure

Throughout the initiative, I:

- Clarified executive assumptions

- Structured exploration into testable models

- Defined validation criteria before scaling up engineering work

- Prepared research assets and prototypes for continuity

Before my sabbatical, I handed the initiative off to two junior designers with:

- Defined hypotheses

- Structured prototypes

- Clear validation goals

This ensured momentum without ambiguity.

Outcome

- Reduced strategic risk before major investment

- Clarified the role and scope of Insights within the product ecosystem

- Aligned executive ambition with customer validation

- Established a structured continuation plan

This project reinforced that executive-facing design requires defining signal, trust, and decision clarity in ambiguous spaces.

Daria Ershova
